Reka Edge

Frontier-level visual intelligence. Optimized for deployment. Engineered for speed.

Leading Performance

across multimodal benchmarks

2.4× faster on average across requests

Easy Deployment

Hugging Face • vLLM

Open Source

Weights available on Hugging Face

Reka Edge is the fastest vision-language model in the 7B to 8B class, designed for real-world systems. It processes videos and images with fewer tokens, which translates into concrete reductions in latency and memory footprint.

Perfect for Edge Deployment

Reka Edge achieves up to 2.4× lower latency on single requests.

Reka Edge 2 is built on:

A 660M-parameter ConvNeXt V2 vision encoder for efficient visual processing

A 6B-parameter language backbone for reasoning and generation

7B total parameters for both high-end performance and resource efficiency
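
To make the resource-efficiency point concrete, the parameter counts above can be turned into a rough weight-memory estimate. This is a back-of-the-envelope sketch only: the storage widths are assumptions (the page does not say which precisions are distributed), and the figures cover weights alone, not activations or the KV cache. The two components sum to 6.66B, which rounds to the stated 7B.

```python
# Rough weight-memory estimate from the parameter counts quoted in the text.
VISION_ENCODER_PARAMS = 660_000_000  # ConvNeXt V2 vision encoder (from the text)
LANGUAGE_PARAMS = 6_000_000_000      # language backbone (from the text)

# Assumed storage widths in bytes per parameter. Which precisions are
# actually available for this model is NOT stated in the source.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}


def total_params() -> int:
    """Sum of the two component sizes given in the text."""
    return VISION_ENCODER_PARAMS + LANGUAGE_PARAMS


def weight_memory_gb(bytes_per_param: float) -> float:
    """Weights only, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return total_params() * bytes_per_param / 1e9


if __name__ == "__main__":
    print(f"total parameters: {total_params() / 1e9:.2f}B")
    for name, width in BYTES_PER_PARAM.items():
        print(f"{name:>9}: {weight_memory_gb(width):6.2f} GB")
```

Real memory use on a device will be higher than these numbers, since runtime buffers and the visual and text token context add to the footprint.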

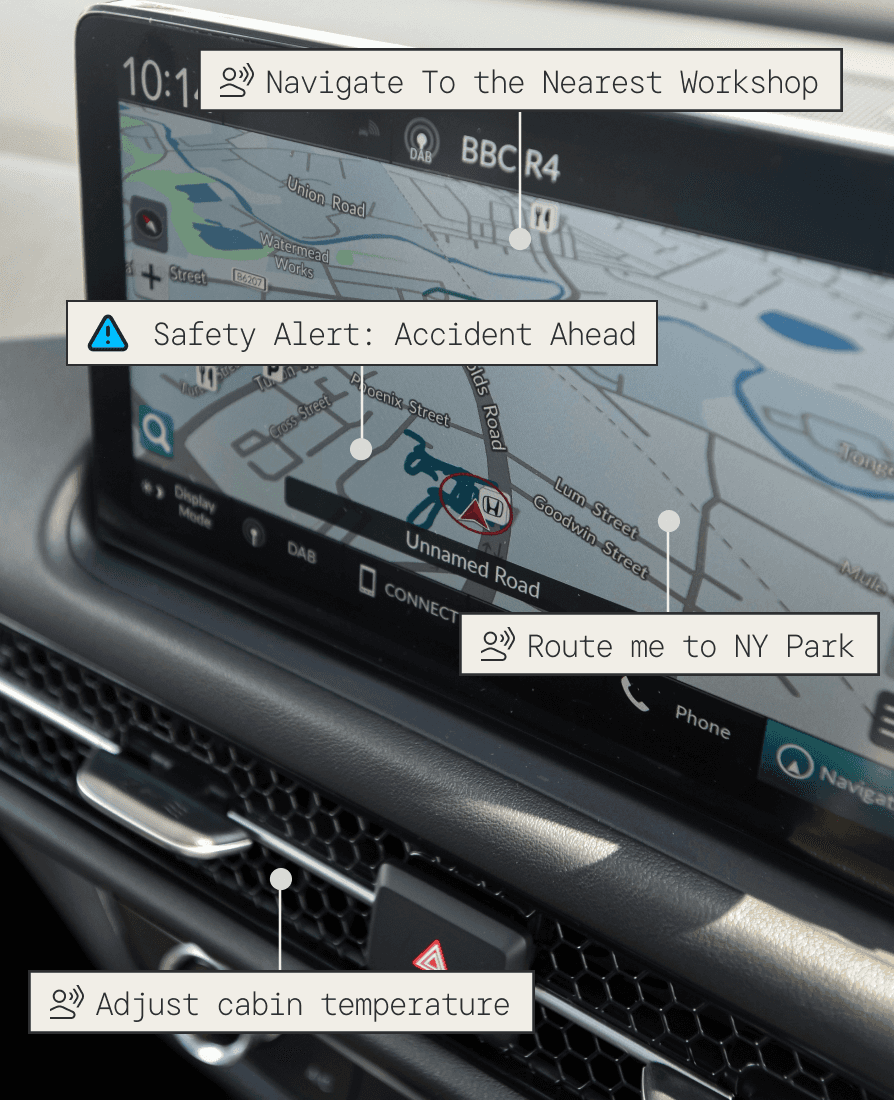

Reka Edge powers the next generation of Physical AI. It is designed for production environments where speed and reliability matter, from robotics to real-time surveillance and agentic systems.

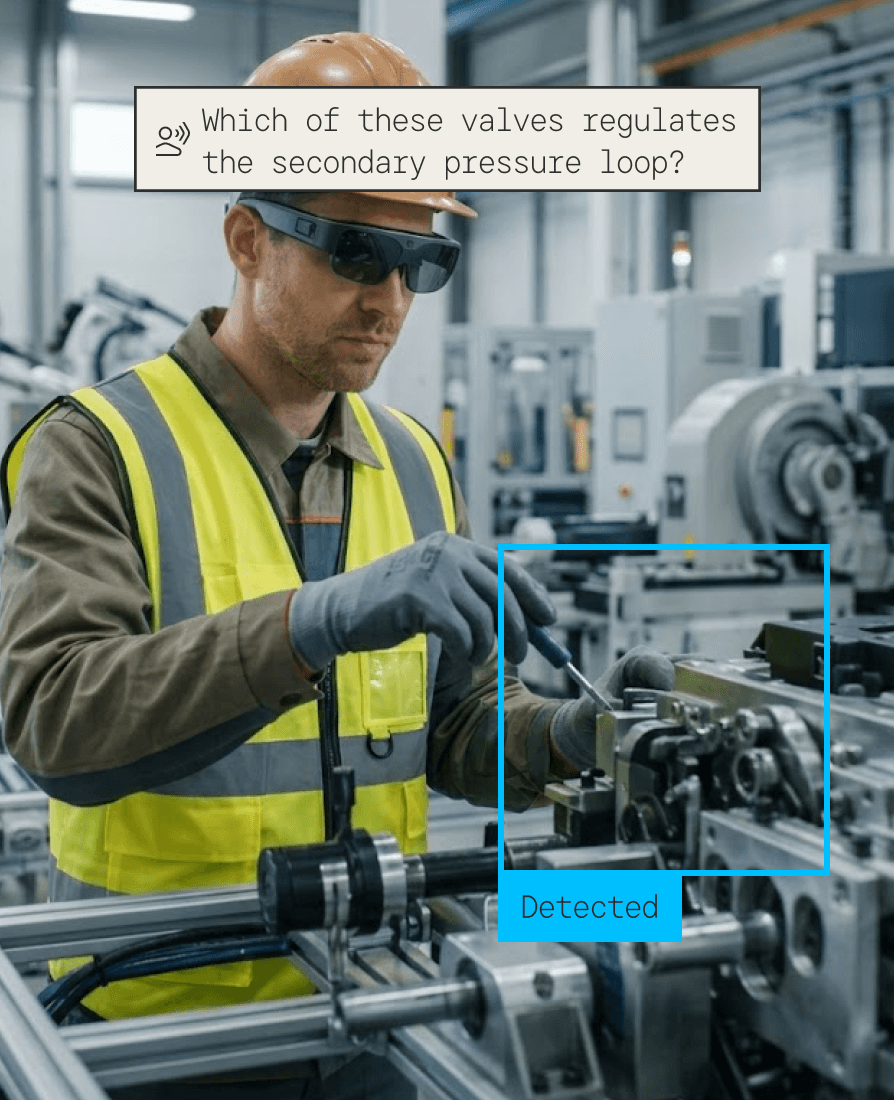

Problem

Physical AI requires fluid, continuous interaction with the unstructured world. Robots cannot wait seconds for a cloud VLM to tell them how to grasp an unfamiliar object. They need exact spatial coordinates and sub-second latency so that control stays smooth, without awkward, dangerous stutters in movement and without costly custom detector stacks.

Solution

Continuous Edge Perception: The robot's stereo cameras stream high frame rate visual data directly to internal edge compute (e.g., NVIDIA Jetson).

Contextual Encoding (Reka Edge): The ConvNeXt V2 vision encoder ingests the video. Its token-efficient processing keeps the robot's onboard memory from overflowing during continuous operation.

Grounded Tool Localization: The model maps the environment, extracting precise bounding boxes for tools, target objects, and obstacles using conversational pointing (e.g., "Where is the 10mm wrench?").

Agentic Motor Control: Using its tool-use framework, the VLM actively orchestrates the robot's hardware APIs (e.g., adjust_grip_pressure(), move_arm_to(x,y,z)) to complete complex, multi-step tasks.
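
The last two steps, grounding and tool use, can be sketched as a small dispatcher that turns a model-emitted tool call into a hardware call. This is an illustrative sketch, not Reka's API: the JSON tool-call shape, the function signatures and units, and the `bbox_center` helper are all assumptions. Only the names `adjust_grip_pressure` and `move_arm_to` come from the text.

```python
import json
from typing import Callable, Dict, Tuple


# Stub hardware functions. The names appear in the text; the signatures,
# units, and return strings are assumptions made for this sketch.
def adjust_grip_pressure(pressure: float) -> str:
    return f"grip pressure set to {pressure}"


def move_arm_to(x: float, y: float, z: float) -> str:
    return f"arm moved to ({x}, {y}, {z})"


# Registry of callable tools, keyed by the name the model would emit.
TOOLS: Dict[str, Callable[..., str]] = {
    "adjust_grip_pressure": adjust_grip_pressure,
    "move_arm_to": move_arm_to,
}


def bbox_center(box: Tuple[float, float, float, float]) -> Tuple[float, float]:
    """Center of an (x_min, y_min, x_max, y_max) box, in the same units."""
    x_min, y_min, x_max, y_max = box
    return ((x_min + x_max) / 2, (y_min + y_max) / 2)


def dispatch(tool_call_json: str) -> str:
    """Run one tool call given as JSON: {"name": ..., "arguments": {...}}.

    The JSON shape is hypothetical; a real deployment would follow whatever
    tool-call format the serving stack actually produces.
    """
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


if __name__ == "__main__":
    # A bounding box for the wrench, as the grounding step might return it.
    print(bbox_center((100.0, 40.0, 180.0, 80.0)))
    print(dispatch('{"name": "move_arm_to", "arguments": {"x": 0.1, "y": 0.2, "z": 0.3}}'))
    print(dispatch('{"name": "adjust_grip_pressure", "arguments": {"pressure": 2.5}}'))
```

Rejecting unknown tool names before calling anything is a deliberate choice here: on a physical robot, a malformed model output should fail loudly rather than reach the actuators.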

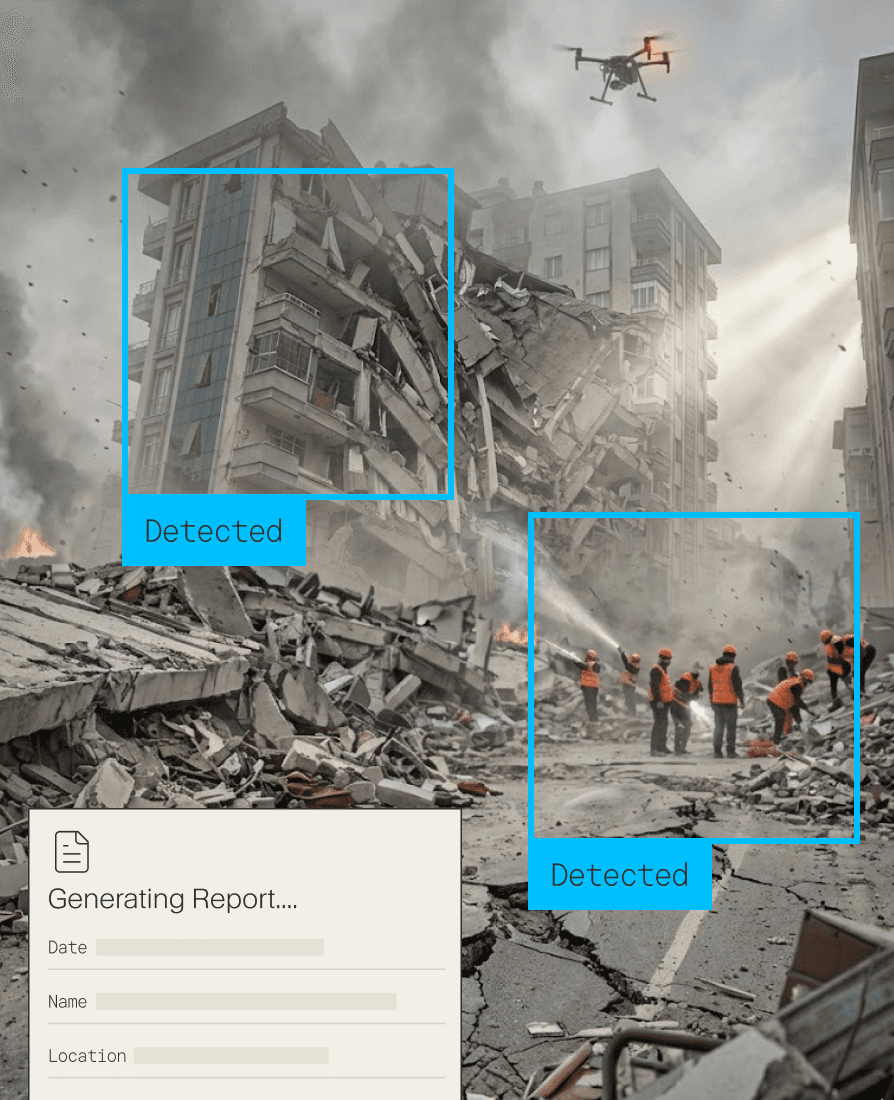

Problem

During search-and-rescue or reconnaissance operations, operators work in chaotic environments that often have no cellular connectivity at all. Streaming HD footage from drones back to a command center introduces lag and risks connection drops, while manual review of footage wastes precious, life-saving time.

Solution

Aerial Perception: Drones equipped with high-resolution optical and thermal cameras survey a disaster zone.

Token-Efficient Scanning: Reka Edge runs locally on the drone's onboard compute, continuously scanning the feed without being overwhelmed by the massive amount of visual data.

Anomaly & Human Detection: The model reasons over the chaotic visual data to distinguish between debris, wildlife, and human survivors, and to identify the visual signatures of a developing fire.

Autonomous Alerting: The VLM instantly logs the exact GPS coordinates and invokes flight control APIs to hover over the target, dropping a pin for ground teams.
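
The detection and alerting steps above can be sketched as a filter over per-frame detections that logs coordinates and asks the flight controller to hover. Everything named here is hypothetical: the `Detection` record, the `FlightController` stand-in with its `hover_over` method, the label set, and the 0.8 confidence threshold are assumptions for illustration, not part of any real drone or Reka interface.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class Detection:
    """One model finding for a frame (hypothetical record layout)."""
    label: str
    confidence: float
    latitude: float
    longitude: float


@dataclass
class FlightController:
    """Stand-in for a real flight-control API; it only records commands."""
    commands: List[str] = field(default_factory=list)

    def hover_over(self, latitude: float, longitude: float) -> None:
        self.commands.append(f"hover {latitude:.5f},{longitude:.5f}")


# Labels that should trigger an alert (assumed for this sketch).
ALERT_LABELS = {"human", "fire"}


def handle_detections(
    detections: List[Detection],
    controller: FlightController,
    min_confidence: float = 0.8,  # assumed threshold
) -> List[Dict[str, object]]:
    """Log a pin and request a hover for each confident alert-worthy detection."""
    alerts: List[Dict[str, object]] = []
    for det in detections:
        if det.label in ALERT_LABELS and det.confidence >= min_confidence:
            controller.hover_over(det.latitude, det.longitude)
            alerts.append(
                {"label": det.label, "lat": det.latitude, "lon": det.longitude}
            )
    return alerts


if __name__ == "__main__":
    controller = FlightController()
    frame = [
        Detection("debris", 0.99, 34.0501, -118.2501),
        Detection("human", 0.91, 34.05, -118.25),
        Detection("human", 0.50, 34.06, -118.26),  # below threshold, ignored
    ]
    print(handle_detections(frame, controller))
    print(controller.commands)
```

Because the pin log is built locally, alerts can be queued on the drone and handed to ground teams whenever a link becomes available, which fits the no-connectivity setting described in the problem statement.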